Key Takeaways

- Definition: Data minimization is the principle that personal data should be adequate, relevant, and limited to what is necessary in relation to the purposes for which they are processed.

- The Risk of Over-Collection: Every piece of data you share is a potential liability. In 2026, “just in case” data collection is a major driver of identity theft and AI-driven manipulation.

- The Power of ‘Less’: Companies like Proton and Nextcloud prove that you can build powerful services without harvesting user data.

- Actionable Steps: Achieving a minimal digital footprint requires a shift in mindset—from “how do I share this?” to “do I need to share this at all?”

Introduction: The “Data Diet” for 2026

In the early 2020s, the tech industry operated on the belief that “data is the new oil”—the more you have, the more valuable you are. But by April 2026, the paradigm has shifted. Data is no longer just an asset; it is a toxic liability.

Between massive AI-powered data breaches and growing legal attention to AI-generated likenesses, such as the NO FAKES Act, the individuals and businesses best positioned for what comes next are those who practice Data Minimization.

Direct Answer: What is Data Minimization?

Data minimization is a core principle of privacy law (including GDPR Article 5(1)(c), the CCPA, and India’s DPDP Act) that requires personal data collection to be limited to what is necessary for a specified, legitimate purpose. In 2026, it is one of the most effective defenses against the “surveillance economy.” By reducing the amount of personal information stored on third-party servers, you lower your risk of being targeted by AI-generated deepfakes, phishing attacks, and unauthorized profiling. From a digital sovereignty perspective, data minimization is the first step toward “Digital Independence”: data you don’t share cannot be used against you.

The Three Layers of Data Minimization

To truly shrink your digital footprint, you must address data minimization at three distinct levels:

1. The Collection Layer (The Gatekeeper)

Stop data before it’s even created. Use tools that don’t track you by default.

- Action: Switch to privacy-first search engines like Brave Search or Kagi.

- Action: Use burner emails and phone numbers for one-time signups.
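A related collection-layer habit is stripping tracking parameters from links before you share or bookmark them, so the next site never receives them. The sketch below is a minimal Python illustration; the parameter set (`utm_*`, `fbclid`, `gclid`, `mc_eid`) is an assumed, incomplete list of common tracking keys, and `strip_tracking` is a hypothetical helper name.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumed, non-exhaustive list of common tracking parameters.
TRACKING_PREFIXES = ("utm_",)
TRACKING_KEYS = {"fbclid", "gclid", "mc_eid"}

def strip_tracking(url):
    """Return `url` with known tracking query parameters removed."""
    parts = urlsplit(url)
    kept = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if not key.startswith(TRACKING_PREFIXES) and key not in TRACKING_KEYS
    ]
    # Rebuild the URL with only the non-tracking parameters (fragment is kept).
    return urlunsplit(parts._replace(query=urlencode(kept)))

print(strip_tracking("https://example.com/article?id=42&utm_source=newsletter&fbclid=abc"))
# → https://example.com/article?id=42
```

Some browsers and dedicated tools maintain much larger lists of tracking parameters; this sketch only shows the idea.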

2. The Retention Layer (The Purge)

If you must collect data, don’t keep it forever. Implement auto-delete policies for your own accounts.

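- Action: Set your Google activity (such as Web & App Activity and YouTube History) to auto-delete after 3 months, the shortest option Google offers, and use similar settings on other platforms where they exist.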

- Action: Regularly audit your Password Manager and delete accounts you no longer use.
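An auto-delete setting is essentially a rolling retention window: anything older than the window is dropped. A minimal Python sketch of that rule, assuming a hypothetical list of activity records that each carry a timezone-aware `created_at` timestamp (this is an illustration of the idea, not how Google or any other platform implements it):

```python
from datetime import datetime, timedelta, timezone

def purge_expired(records, retention_days, now):
    """Return only the records created within the last `retention_days` days.

    Assumes each record is a dict with a timezone-aware `created_at`
    datetime (a hypothetical schema for illustration).
    """
    cutoff = now - timedelta(days=retention_days)
    return [r for r in records if r["created_at"] >= cutoff]

now = datetime(2026, 4, 1, tzinfo=timezone.utc)
history = [
    {"query": "flight to Lisbon", "created_at": datetime(2025, 12, 15, tzinfo=timezone.utc)},
    {"query": "privacy guides", "created_at": datetime(2026, 2, 10, tzinfo=timezone.utc)},
]
kept = purge_expired(history, retention_days=90, now=now)
print([r["query"] for r in kept])  # → ['privacy guides']
```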

3. The Processing Layer (The Local Shift)

Process your data where it lives—on your device.

- Action: Use Local LLMs (Ollama) to summarize documents instead of uploading them to cloud-based AI.

- Action: Use on-device photo organization tools rather than cloud-based facial recognition services.
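A minimal Python sketch of the local-processing idea, assuming Ollama is installed and running on its default port (11434) and that a model has already been pulled. `llama3.2` is an assumed model name, so substitute whichever model you have. The code calls Ollama's local `/api/generate` endpoint using only the standard library, so the document never leaves your machine.

```python
import json
import urllib.request

# Assumptions: Ollama is running locally on its default port, and this model
# has already been pulled (for example with `ollama pull llama3.2`).
OLLAMA_URL = "http://localhost:11434/api/generate"
MODEL = "llama3.2"

def build_summary_request(text, model=MODEL):
    """Build a POST request asking the local model to summarize `text`."""
    payload = {
        "model": model,
        "prompt": "Summarize the following document in five bullet points:\n\n" + text,
        "stream": False,  # ask for one JSON object instead of a token stream
    }
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )

def summarize_locally(text, model=MODEL):
    """Send the request to the local server and return the generated text."""
    with urllib.request.urlopen(build_summary_request(text, model)) as resp:
        return json.loads(resp.read())["response"]
```

Once the Ollama server is running, call something like `summarize_locally(open("report.txt").read())`. With `"stream": False`, the server returns a single JSON object whose `response` field holds the generated text.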

Comparison: Minimal vs. Maximal Data Stacks (2026)

| Service | The “Maximal” Way (High Risk) | The “Minimal” Way (Sovereign) |
|---|---|---|
| Email | Gmail (Content readable by provider) | Proton Mail (Zero-knowledge) |
| Cloud Storage | Google Drive / iCloud | Nextcloud (Self-hosted) |
| Search | Google / Bing | Brave Search / SearXNG |
| AI Assistant | ChatGPT (Cloud logs) | Local Llama 4 (Ollama) |
| Browser | Chrome (Privacy Sandbox) | Firefox / LibreWolf |

How to Audit Your Data Footprint in 30 Minutes

If you’re ready to start your “data diet,” follow this simple 2026 audit guide:

- Search Your Name: Use a private search engine to see what information is publicly available about you.

- Check App Permissions: Go to your phone’s settings and revoke location, microphone, and camera access for any app that doesn’t strictly need it.

- Delete “Ghost” Accounts: Use a tool like saymine or your password manager to find and close old accounts.

- Opt-Out of AI Training: Several platforms (including LinkedIn, X, and Meta) now offer settings or request forms to opt out of having your data used for AI training. Use them.
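If your password manager can export your saved logins, a few lines of code can flag accounts you have not used in a long time. Export formats differ by product, so this sketch assumes a hypothetical CSV with `name`, `url`, and `last_used` (ISO date) columns; adapt the column names to your manager's actual export, and delete the exported file afterward because such exports can contain passwords.

```python
import csv
import io
from datetime import date, timedelta

def find_ghost_accounts(csv_text, today, max_idle_days=365):
    """Return names of accounts whose `last_used` date is older than the cutoff.

    Assumes a hypothetical export with `name`, `url`, `last_used` columns.
    """
    cutoff = today - timedelta(days=max_idle_days)
    ghosts = []
    for row in csv.DictReader(io.StringIO(csv_text)):
        if date.fromisoformat(row["last_used"]) < cutoff:
            ghosts.append(row["name"])
    return ghosts

export = """name,url,last_used
OldForum,https://forum.example,2022-03-01
Bank,https://bank.example,2026-03-15
ShopOnce,https://shop.example,2024-11-20
"""
print(find_ghost_accounts(export, today=date(2026, 4, 1)))  # → ['OldForum', 'ShopOnce']
```

Each flagged name is a candidate to close through the site's own account-deletion process, not just to remove from the password manager.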

What data minimization looks like in real life

People often hear the principle and assume it means going off-grid. It usually does not.

In practice, data minimization means asking better questions before every digital interaction:

- Does this app need my exact location, or just approximate city data?

- Does this site need a real phone number, or will an alias work?

- Does this document need cloud AI, or can I process it locally?

- Does this service need permanent retention, or only a short transaction window?

Small decisions like these matter more than dramatic one-time privacy gestures.
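The first question above, exact location versus approximate area, has a simple technical counterpart: reduce coordinate precision before the data leaves your device. A minimal Python sketch, assuming simple rounding is acceptable for the use case (`coarsen_location` is a hypothetical helper, and rounding alone is not a formal anonymity guarantee):

```python
def coarsen_location(lat, lon, decimals=2):
    """Round GPS coordinates to reduce their precision.

    Two decimal places of latitude span roughly 1.1 km; one decimal place
    spans roughly 11 km. Longitude spacing shrinks away from the equator,
    so the cell is narrower east-west at high latitudes.
    """
    return round(lat, decimals), round(lon, decimals)

exact = (48.858370, 2.294481)
print(coarsen_location(*exact))              # → (48.86, 2.29)
print(coarsen_location(*exact, decimals=1))  # → (48.9, 2.3)
```

Repeated reports from the same rounded cell can still reveal routines such as a home or workplace, so coarse location reduces risk rather than removing it.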

Why organizations struggle with minimization

Companies often know the principle and still fail to apply it because more data feels safer internally. Teams tell themselves that extra logs, extra profile fields, and extra retention might be useful later.

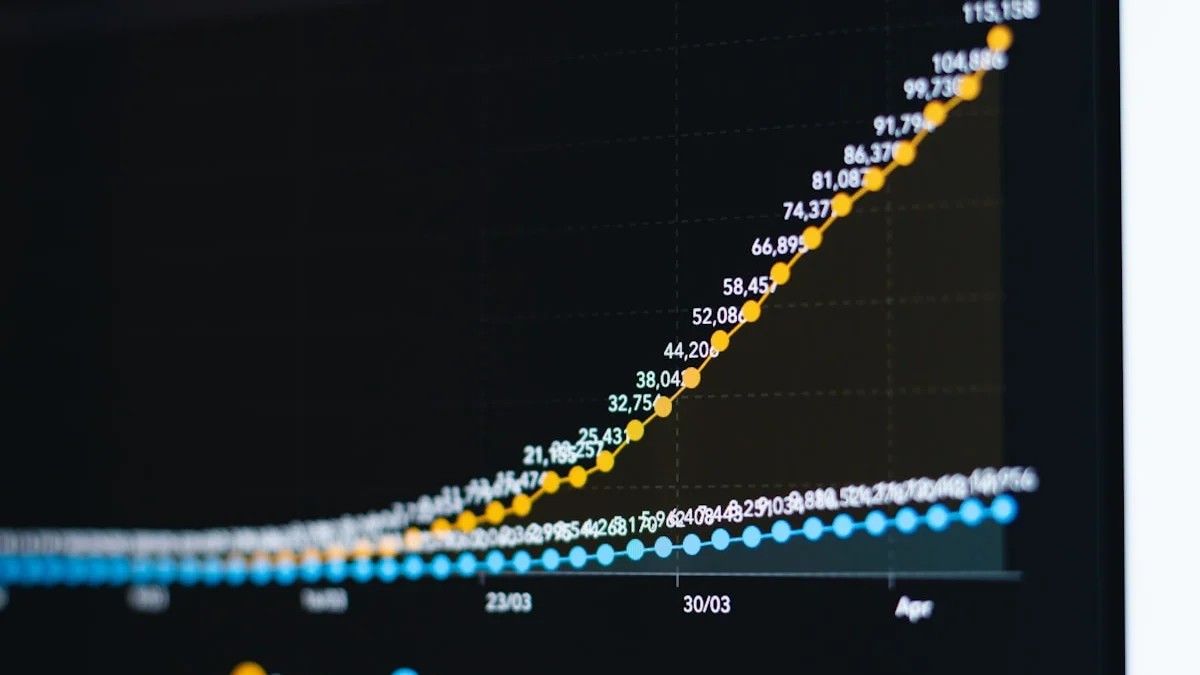

But in 2026, over-collection creates four predictable costs:

- bigger breach exposure

- higher compliance burden

- more complex internal governance

- more tempting material for AI profiling and repurposing

The discipline is not technical first. It is cultural. Someone has to be willing to say: we are not collecting this because we do not need it.
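Once that decision is made, it can be enforced in code: declare, per purpose, which fields may be stored, and drop everything else at the point of collection. A minimal Python sketch; the purposes and field names in `PURPOSE_FIELDS` are hypothetical examples, not a recommended schema.

```python
# Hypothetical purpose-to-field allowlist. Anything not listed is never stored.
PURPOSE_FIELDS = {
    "newsletter": {"email"},
    "shipping": {"name", "street", "city", "postal_code", "country"},
}

def minimize(payload, purpose):
    """Return only the fields allowed for `purpose`.

    An undeclared purpose raises KeyError: no declared purpose, no collection.
    """
    allowed = PURPOSE_FIELDS[purpose]
    return {key: value for key, value in payload.items() if key in allowed}

signup = {"email": "ana@example.com", "birthday": "1990-05-01", "phone": "555-0100"}
print(minimize(signup, "newsletter"))  # → {'email': 'ana@example.com'}
```

Failing loudly on an undeclared purpose is the design choice that matters here: someone has to write down why a field is needed before the system will accept it.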

Frequently Asked Questions (FAQ)

Is data minimization the same as data privacy?

No. Data privacy is about who has access to data. Data minimization is about whether the data should exist at all. You can have perfect privacy on a mountain of data, but if that data is breached, your privacy is gone. If the data doesn’t exist, it can’t be breached.

Does data minimization break AI?

It doesn’t “break” AI, but it does change how we build it. In 2026, we are moving toward Small Language Models (SLMs) and Federated Learning, which allow AI to provide value without needing to see every user’s raw data.
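Federated learning keeps raw data on each device and shares only model updates. The sketch below shows the core idea of federated averaging (FedAvg) on a toy one-parameter model `y = w * x`; the client datasets, learning rate, and round counts are made-up illustration values, and real deployments often add secure aggregation, differential privacy, and far larger models.

```python
def local_update(w, data, lr=0.01, epochs=5):
    """Run a few gradient-descent steps on one client's private data.

    Only the updated weight leaves the client; the (x, y) pairs never do.
    """
    for _ in range(epochs):
        # Gradient of mean squared error for the model y = w * x.
        grad = sum(2 * (w * x - y) * x for x, y in data) / len(data)
        w -= lr * grad
    return w

def federated_average(client_datasets, rounds=50, w=0.0):
    """Server loop: broadcast w, collect client weights, average by data size."""
    total = sum(len(d) for d in client_datasets)
    for _ in range(rounds):
        client_ws = [local_update(w, d) for d in client_datasets]
        w = sum(cw * len(d) for cw, d in zip(client_ws, client_datasets)) / total
    return w

# Two clients whose private data both follow y = 2x.
clients = [
    [(1, 2), (2, 4)],
    [(3, 6), (4, 8), (5, 10)],
]
print(round(federated_average(clients), 4))  # → 2.0
```

The server only ever sees the weights returned by `local_update`, which is the property that lets the model learn without collecting each user's raw records.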

Can I practice data minimization on a budget?

Yes! Most data minimization tools (like Firefox, Signal, and self-hosted Nextcloud) are free or open-source. In many cases, practicing data minimization actually saves you money by reducing subscription costs and preventing expensive data-theft incidents.

What is the fastest win for most people?

Permission cleanup and account cleanup. Revoking unnecessary app access, deleting unused accounts, and shortening retention windows can significantly reduce your footprint in less than an hour.

Why is data minimization so important for AI systems?

Because AI systems turn stored data into a source of secondary risk. Information that once sat quietly in a database can now be profiled, correlated, summarized, or misused at scale. Less stored data means less material available for those downstream harms.

What this means for sovereignty

Data minimization is one of the few privacy principles that scales from individuals to nations. It works for personal devices, small businesses, public institutions, and AI systems because it starts at the root question: should this data exist here at all?

That is why it matters so much in 2026. The strongest sovereignty move is often not encrypting more of what you collected. It is refusing unnecessary collection before it becomes someone else’s leverage.
