Executive Summary: The Democratic Deficit

By March 2026, the United States has reached a critical juncture in its relationship with artificial intelligence. For years, the debate centered on “AI Safety” and “Global Competitiveness.” But as we enter the second half of the decade, a more profound realization has taken hold: The AI governance crisis is, at its heart, a democracy crisis.

When the algorithms that determine who gets a loan, who gets a job, and how a city’s resources are allocated are owned by a handful of trillion-dollar corporations, the traditional levers of democratic control lose much of their force. We are governed not only by laws, but by Inference Engines.

At Vucense, we analyze this shift through the lens of Digital Sovereignty. In this deep dive, we contrast the current obsession with “Governance Risk” against the urgent need for “Sovereign Capacity Building.” We also explore the massive labor response to the “Agentic Era” and what it means for the future of work in a democratic society.

Direct Answer: Why is US AI governance described as a democracy crisis in 2026?

The US AI governance crisis is described as a democracy crisis in 2026 because the rapid deployment of autonomous AI agents and “Extreme Reasoning” models has outpaced the government’s legislative capacity for oversight. This creates a “Democratic Deficit,” where critical social and economic decisions are made by opaque, corporate-owned algorithms rather than through public, democratic processes. The core of the crisis lies in the concentration of “Inference Power” in the hands of a few “Frontier Labs,” which effectively act as a private shadow government. To resolve this, Vucense argues that the US must pivot from a policy of “Governance Risk Mitigation” to one of “Public Capacity Building,” ensuring that the infrastructure of AI is a sovereign public good rather than a private corporate monopoly.

Part 1: The Governance-Capacity Paradox

The central tension in US AI policy in 2026 is the Governance-Capacity Paradox.

1.1 Governance as a Risk Mitigation Strategy

Most current US AI regulation (such as the 2026 AI Accountability Act) is focused on “Risk Mitigation.” This approach treats AI as a dangerous external force that must be “tamed” or “aligned.”

- The Problem: Risk mitigation assumes that the current power structure is fine, and we just need to make the AI “safer.” It ignores the underlying question of who owns and controls the technology.

- The Result: A landscape of “Paper Tiger” regulations that corporate lawyers easily navigate, while the public remains vulnerable to algorithmic bias and extraction.

1.2 Capacity Building as a Sovereignty Strategy

In contrast, Capacity Building is about building the public infrastructure of intelligence.

- The Vision: Instead of just regulating OpenAI or Google, the government should build out the National AI Research Resource (NAIRR) as infrastructure that is truly public.

- The Sovereignty Angle: Sovereignty isn’t just about saying “No” to bad AI; it’s about having the capacity to build “Good AI” that reflects democratic values.

Part 2: The Labor Response — The Fight for Algorithmic Sovereignty

In 2026, the labor movement has moved beyond “higher wages” to a much more fundamental demand: Algorithmic Sovereignty.

2.1 The “Agentic Strike” of 2025

Following the mass deployment of “Managerial Agents” in the service and logistics sectors, the Unified Labor Front (ULF) led a nationwide strike.

- The Demand: “No Management by Algorithm Without Worker Audit.”

- The Outcome: The strike forced major corporations to disclose the “Optimization Logic” used by their internal AI agents. For the first time, workers gained the right to “veto” specific algorithmic decisions.

2.2 The Rise of Worker-Owned Models

We are also seeing the emergence of Labor AI Cooperatives. These are worker-owned organizations that train their own AI models to optimize their tasks, rather than being optimized by corporate agents. This is a prime example of Bottom-Up Sovereignty.

Part 3: Vucense Analysis — The Corporate-Sovereign Capture

At Vucense, we track the Sovereignty Score of national policies. Currently, the US scores poorly (40/100) due to what we call Corporate-Sovereign Capture.

3.1 The “Frontier Lab” Lobby

The leading AI labs have successfully framed “AI Sovereignty” as “American Corporate Dominance.” This allows them to avoid antitrust scrutiny by arguing that any regulation of their power would be a win for China.

- The Risk: This creates a world where the “National Interest” is synonymous with the “Shareholder Interest” of three or four companies. This is the antithesis of democratic sovereignty.

3.2 The Infrastructure Monopoly

By controlling the Compute Stack (from chips to cloud), these labs have created a “Sovereign Moat.” As we discussed in our Amazon Trainium article, custom silicon is the ultimate sovereignty lever. When that lever is owned by a private corporation, the public’s ability to govern the technology is diminished.

Part 4: Technical Deep Dive — The Auditing Gap

Why is it so hard to govern AI in 2026? The answer lies in the Auditing Gap.

4.1 The “Black Box” Problem of 2026

Modern “Extreme Reasoning” models (like GPT-5.4 and Claude 5) use multi-step internal reasoning chains that are difficult to monitor in real time.

- The Latency Challenge: Auditing an agent’s reasoning process adds latency that most commercial applications won’t accept.

- The Proprietary Barrier: Frontier labs argue that their “Safety Guardrails” are trade secrets, preventing independent researchers from conducting deep audits.

4.2 The Solution: Open-Source Auditing Agents

At Vucense, we advocate for the deployment of Independent Auditing Agents. These are open-source, local-first AI models whose only job is to monitor the inputs and outputs of proprietary models, flagging potential bias or sovereignty violations in real time.
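
The monitoring pattern described here can be sketched in a few lines of Python. This is a minimal illustration, not a real auditing agent: it assumes the proprietary model is reachable as a plain Python callable, and the names `AuditRecord`, `audited`, and `fake_model` are hypothetical. Simple keyword matching stands in for the local model that would do the actual flagging.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class AuditRecord:
    """One logged interaction with the monitored model."""
    prompt: str
    output: str
    flags: List[str] = field(default_factory=list)


def audited(
    model: Callable[[str], str],
    flag_terms: List[str],
    log: List[AuditRecord],
) -> Callable[[str], str]:
    """Wrap `model` so every call is logged and checked against `flag_terms`."""
    def wrapper(prompt: str) -> str:
        output = model(prompt)
        lowered = output.lower()
        # Keyword matching is a placeholder for a real local auditing model.
        flags = [term for term in flag_terms if term.lower() in lowered]
        log.append(AuditRecord(prompt, output, flags))
        return output  # the caller still receives the unmodified output
    return wrapper


def fake_model(prompt: str) -> str:
    """Stand-in for a proprietary model (hypothetical)."""
    return "Loan denied based on applicant zip code."


audit_log: List[AuditRecord] = []
monitored = audited(fake_model, ["zip code", "gender"], audit_log)
monitored("Should we approve this loan?")
```

Because the wrapper only observes inputs and outputs, it needs no access to the model's internals, which is the point of auditing from the outside when guardrails are treated as trade secrets.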

Part 5: Case Study — The 2026 California “Algorithmic Bill of Rights”

California has once again taken the lead with the AB-2026 Algorithmic Bill of Rights.

5.1 The Core Tenets

The law establishes five fundamental rights for every citizen:

- The Right to Human Review: Any significant life decision made by an AI must be reviewable by a human within 24 hours.

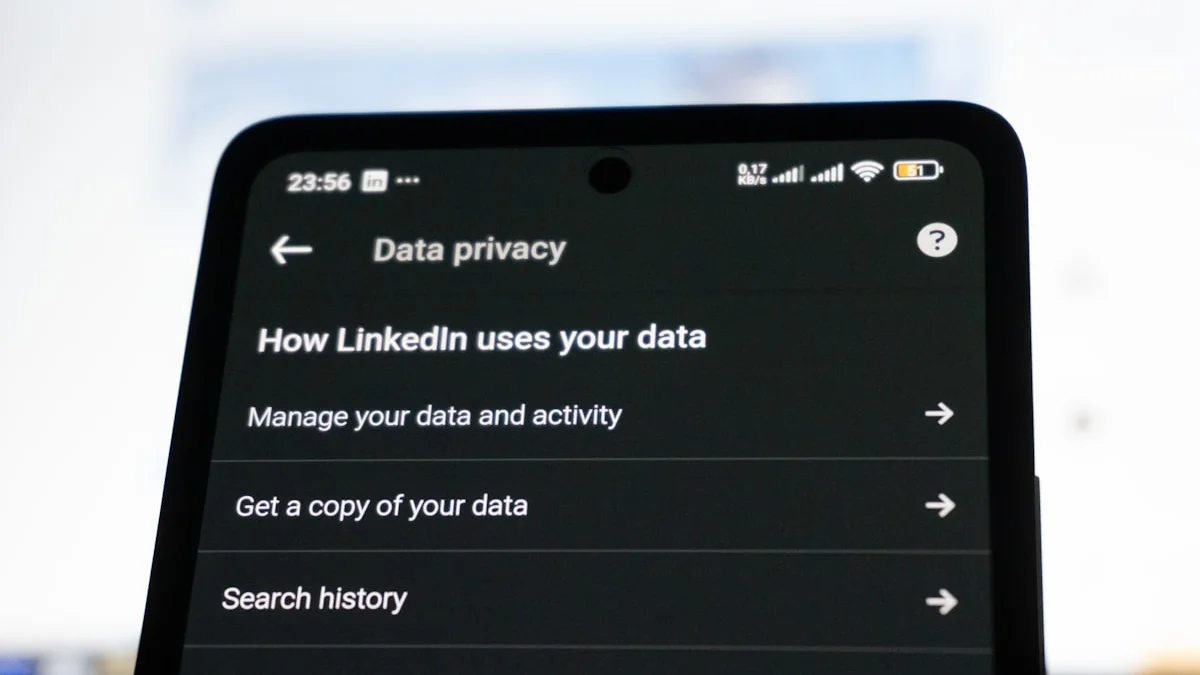

- The Right to Data Portability: Users must be able to export their “Personalized Model Weights” (their digital twin data).

- The Right to Opt-Out of Agentic Tracking: The right to interact with digital services without being tracked by an AI agent.

- The Right to Auditable Fairness: Corporations must prove their models are not biased against protected groups.

- The Right to Sovereign Infrastructure: A mandate that public data must be processed on sovereign, state-certified clouds.
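
A right like “Auditable Fairness” only becomes enforceable once it is tied to a measurable test. One common candidate is the disparate impact ratio: the lowest group approval rate divided by the highest. The sketch below is an assumption about how such a check might look, not a description of what AB-2026 requires. The 0.8 threshold is the “four-fifths” rule of thumb from US employment-selection guidance, and the function names and sample data are hypothetical.

```python
from collections import defaultdict
from typing import Dict, List, Tuple


def selection_rates(decisions: List[Tuple[str, bool]]) -> Dict[str, float]:
    """Approval rate per group from (group, approved) pairs."""
    totals: Dict[str, int] = defaultdict(int)
    approved: Dict[str, int] = defaultdict(int)
    for group, ok in decisions:
        totals[group] += 1
        if ok:
            approved[group] += 1
    return {g: approved[g] / totals[g] for g in totals}


def disparate_impact_ratio(decisions: List[Tuple[str, bool]]) -> float:
    """Lowest group approval rate divided by the highest."""
    rates = selection_rates(decisions)
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was approved in any group, so no disparity to measure
    return min(rates.values()) / highest


# Made-up sample: group A approved 8 of 10 times, group B 5 of 10 times.
sample = (
    [("A", True)] * 8 + [("A", False)] * 2
    + [("B", True)] * 5 + [("B", False)] * 5
)
ratio = disparate_impact_ratio(sample)
passes_four_fifths = ratio >= 0.8  # assumed threshold, see lead-in
```

A single ratio like this is a coarse screen. It can show that outcomes differ between groups, but not why, so it is a starting point for an audit rather than proof of fairness.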

5.2 The Federal Conflict

The California law has created a massive conflict with the federal government, which argues that state-level AI regulation interferes with national security and international competition. This “Sovereignty Civil War” is expected to reach the Supreme Court by 2027.

Part 6: Geopolitical Context — The 2026 Democracy War

The US governance crisis is not happening in a vacuum. It is part of a global Democracy War between different models of AI sovereignty.

6.1 The EU Model: Sovereignty via Regulation

As discussed in our EU AI Act guide, Europe is attempting to build sovereignty through strict legal frameworks.

- The Success: It protects citizens’ rights.

- The Failure: It has failed to build the “Sovereign Capacity” (the hardware and models) to compete globally.

6.2 The China Model: Sovereignty via Alignment

As we explored in our China Industrial AI article, China builds sovereignty by aligning AI directly with national industrial goals.

- The Success: Massive industrial efficiency.

- The Failure: Total loss of individual sovereignty.

6.3 The Vucense Model: Sovereign Individualism

At Vucense, we advocate for a third path: Sovereign Individualism powered by Local AI. This model uses technology to empower the individual and the community, rather than the corporation or the state.

Part 7: The Sovereign Labor Movement — Collective Bargaining for the Agentic Era

One of the most significant developments in 2026 is the emergence of the Sovereign Labor Movement (SLM). This is not your grandfather’s labor union; it is a tech-native coalition of workers, developers, and activists who recognize that the fight for labor rights is now the fight for data sovereignty.

7.1 The “Model Neutrality” Clause

The SLM has successfully integrated “Model Neutrality” clauses into several major union contracts in 2026. These clauses mandate that:

- No Forced Lock-in: Workers cannot be forced to use a single proprietary AI model for their core tasks.

- The Right to “Custom Weights”: Highly skilled workers (such as engineers, designers, and researchers) have the right to train and maintain their own “Personalized LoRA” (Low-Rank Adaptation) layers on top of base models, which they own and can take with them if they leave the company.

- Algorithmic Transparency: Any AI-driven performance metric must be auditable by a union-appointed “Data Steward.”
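
For readers unfamiliar with LoRA, a short sketch shows why an adapter is small enough for a worker to own and carry. LoRA leaves a base weight matrix W (d × k) frozen and trains two small matrices, B (d × r) and A (r × k), giving effective weights W + (alpha / r) · B · A. The layer sizes below are illustrative assumptions, not figures from any specific model, and the plain-list arithmetic is for clarity rather than performance.

```python
from typing import List

Matrix = List[List[float]]


def lora_param_count(d: int, k: int, r: int) -> int:
    """Trainable parameters in one LoRA adapter: B is d x r, A is r x k."""
    return r * (d + k)


def apply_lora(W: Matrix, B: Matrix, A: Matrix, alpha: float) -> Matrix:
    """Return W + (alpha / r) * (B @ A), where r is the adapter rank."""
    r = len(A)
    scale = alpha / r
    return [
        [
            W[i][j] + scale * sum(B[i][m] * A[m][j] for m in range(r))
            for j in range(len(W[0]))
        ]
        for i in range(len(W))
    ]


# Illustrative sizes: one 4096 x 4096 layer with a rank-8 adapter.
full_params = 4096 * 4096
adapter_params = lora_param_count(4096, 4096, 8)

# Tiny worked example with rank 1.
W = [[1.0, 0.0], [0.0, 1.0]]
B = [[1.0], [2.0]]
A = [[0.5, 0.5]]
merged = apply_lora(W, B, A, alpha=1.0)
```

With these sizes the adapter holds 65,536 parameters against 16,777,216 in the full layer, a factor of 256. The base model stays with whoever licenses it, while the adapter is a separate and much smaller file.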

7.2 The Rise of “Pro-Labor” Local AI

We are also seeing the development of AI tools specifically designed for labor organizing. These local-first models help workers analyze complex corporate documents, identify safety violations, and coordinate collective action without being tracked by corporate surveillance agents. This is Capacity Building in its purest form—using technology to level the playing field between labor and capital.

Part 8: The 2026 “Digital Bill of Rights” and the Future of Governance

As we look toward 2027, the focus is shifting from “AI Ethics” to a formal Digital Bill of Rights. This document, currently being drafted by a non-partisan commission, aims to codify the principles of sovereignty into the very fabric of American law.

8.1 The Ten Commandments of Digital Sovereignty

The proposed bill includes:

- The Right to Privacy by Design: All public-facing AI must use local-first or zero-knowledge protocols by default.

- The Right to an Unbiased Inference: The government must provide public compute for auditing proprietary models.

- The Right to Data Ownership: All personal data is the property of the individual, not the platform.

- The Right to Algorithmic Explanation: No life-altering decision can be made by an AI without a human-readable explanation.

- The Right to Human Contact: The right to speak to a human being for any essential public service.

- The Right to Disconnect: The right to live without being tracked or “optimized” by agentic systems.

- The Right to Sovereign Education: Public schools must teach “Agentic Literacy” as a core subject.

- The Right to Open Infrastructure: A mandate for the use of open standards (like the Model Context Protocol, or MCP) in all government AI systems.

- The Right to Resource Transparency: Full disclosure of the energy and water usage of any large-scale AI model.

- The Right to Digital Assembly: The right to form decentralized autonomous organizations (DAOs) for the purpose of collective bargaining.

Part 9: The Future of the “Sovereign Citizen” (2027-2030)

What does a democratic AI future look like?

- Public Compute Utilities: The rise of municipal or state-owned compute clusters that provide “Sovereign Inference” to citizens as a basic utility.

- Decentralized Governance Protocols: Using blockchain and zero-knowledge proofs to allow for public auditing of AI models without revealing proprietary data.

- The Education of the Agentic Citizen: A massive shift in education toward “Agentic Literacy”—the ability to navigate, audit, and build your own AI tools.

Part 10: Action Plan for the Sovereign Operator

If you are a policymaker or a citizen in 2026, here is how to reclaim your sovereignty:

10.1 Demand Infrastructure Transparency

Don’t just ask if the AI is “safe.” Ask: “Where is the hardware? Who owns the weights? Who controls the energy?” If the answer is a private corporation, your sovereignty is at risk.

10.2 Support Local-First AI

The only way to truly bypass the “Democracy Crisis” is to move your intelligence off the corporate cloud and onto your own hardware. Support open-source projects like Ollama, llama.cpp, and the Model Context Protocol (MCP).
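
As a concrete picture of local-first inference, the sketch below queries a model served by Ollama on your own machine over its local HTTP API. It assumes Ollama is running on its default port (11434) and that a model has already been pulled. The model name `llama3` is a placeholder, and the helper names are hypothetical.

```python
import json
import urllib.request

# Ollama's default local endpoint; nothing leaves the machine.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_request(prompt: str, model: str = "llama3") -> urllib.request.Request:
    """Build a non-streaming generate request for a locally served model."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask_local_model(prompt: str, model: str = "llama3") -> str:
    """Send the prompt to the local server and return the generated text."""
    with urllib.request.urlopen(build_request(prompt, model)) as resp:
        return json.loads(resp.read())["response"]


# Usage, with the Ollama server running locally:
#   print(ask_local_model("Summarize this union contract clause: ..."))
```

The request goes to `localhost`, so the prompt and the response never touch a third-party cloud.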

10.3 Participate in the Algorithmic Commons

Contribute to open datasets and open-weight models. The “Sovereign AI” of the future will be built on the Digital Commons, not in a secret corporate lab.

Conclusion: The Sovereignty Baseline

The US AI governance crisis is a wake-up call for every democratic society. We have allowed the infrastructure of our intelligence to be privatized, and we are now paying the price in the form of a “Democratic Deficit.”

But the crisis is also an opportunity. It is a chance to rebuild our institutions for the Agentic Era—to move from a model of “Corporate Sovereignty” to one of “Public Sovereignty.”

In 2026, the question is simple: Do we govern the AI, or does the AI govern us? The answer will depend on our ability to build the sovereign capacity to think, act, and reason for ourselves.

Related Articles

- OpenAI’s Aggressive Expansion 2026: Why Private Equity is Funding the Future of Sovereign AI Infrastructure

- Amazon Trainium: The Silicon powering the 2026 AI labs

- Tencent’s OpenClaw: The Agent as Interface in the Super-App Era

- China’s Industrial AI Surge: The New Strategic Sovereignty

- The 2026 Infrastructure Audit: Who owns the silicon?

Frequently Asked Questions

What is the simplest first step to improve my digital privacy?

Start with your browser and search engine. Switch to Firefox with uBlock Origin, and use a privacy-first search engine like Brave Search or DuckDuckGo. This alone eliminates the majority of passive tracking.

Is true privacy online possible in 2026?

Complete anonymity is extremely difficult, but meaningful privacy is achievable. Using a VPN, encrypted messaging, and privacy-respecting services dramatically reduces exposure. The goal is data minimisation, not perfection.

What is the difference between privacy and security?

Privacy is about controlling who sees your data. Security is about protecting data from unauthorised access. Sovereign tech prioritises both together.
