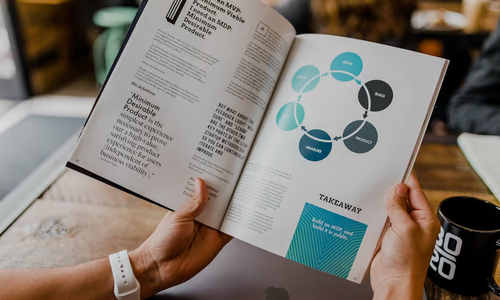

Key Takeaways

- Federal Protection: The NO FAKES Act (Nurture Originals, Foster Art, and Keep Entertainment Safe) creates a first-of-its-kind federal right for individuals to control their digital likeness.

- Liability: Liability extends beyond the people who create deepfakes. Platforms that fail to remove unauthorized deepfakes after a takedown notice can also be held liable.

- Commercial Use: The law specifically targets the unauthorized commercial use of AI-generated voices and images, protecting artists and everyday citizens alike.

- The Sovereignty Angle: The act is a major step forward, but true protection still requires personal digital sovereignty: using local-first tools to manage your own biometric data.

Introduction: Why the NO FAKES Act Matters in 2026

By April 2026, the proliferation of hyper-realistic AI deepfakes has reached a breaking point. From viral celebrity “endorsements” that never happened to sophisticated voice-cloning scams targeting elderly US citizens, the line between reality and digital forgery has blurred.

The NO FAKES Act of 2026, introduced to the US Congress this spring, represents the most significant federal effort to date to reclaim individual control over digital identity.

Direct Answer: What is the NO FAKES Act of 2026?

The NO FAKES Act (Nurture Originals, Foster Art, and Keep Entertainment Safe Act) is a US federal bill, introduced in 2026, designed to protect individuals from unauthorized AI-generated replicas of their voice and likeness. Unlike existing state-level “Right of Publicity” laws, it would provide a consistent national framework for digital identity protection. It would allow individuals, both public figures and private citizens, to sue for damages when their likeness is misappropriated for commercial use, political propaganda, or defamatory deepfakes. From a digital sovereignty perspective, the act amounts to legal recognition that your biometric data is your property, though it still relies on centralized enforcement rather than technical measures such as self-hosting.

The Four Pillars of the NO FAKES Act

The 2026 legislation focuses on four primary areas of enforcement:

1. The Right of Control

Under the bill, every US citizen would have a federally protected right to authorize or prohibit the use of their digital likeness (voice, face, and body) in AI-generated content.

2. Platform Accountability

Major social media and hosting platforms (like X, YouTube, and TikTok) must implement “Notice and Takedown” procedures for unauthorized deepfakes, similar to DMCA copyright rules.

3. Commercial Damages

The act allows for statutory damages starting at $5,000 per violation, plus attorney fees, making it financially risky for “deepfake farms” to operate in the US.
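To make the scale of that risk concrete, here is a minimal arithmetic sketch. It assumes only the article's figure of $5,000 as a per-violation floor. The function name, the violation count, and the attorney-fee amount are hypothetical, and real awards would depend on the final statutory text and the court.

```python
# Figure taken from the article: statutory damages "starting at $5,000 per violation".
STATUTORY_MINIMUM_USD = 5_000


def minimum_exposure(violations: int, attorney_fees_usd: int = 0) -> int:
    """Lower bound on liability: per-violation minimum plus any awarded fees."""
    if violations < 0 or attorney_fees_usd < 0:
        raise ValueError("counts and fees must be non-negative")
    return violations * STATUTORY_MINIMUM_USD + attorney_fees_usd


# A hypothetical operation behind 200 infringing clips, with an assumed
# $40,000 in attorney fees awarded to the plaintiff.
print(minimum_exposure(200, attorney_fees_usd=40_000))  # 1040000
```

Because the figure is a floor, the result is a lower bound rather than a prediction.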

4. Post-Mortem Rights

The law extends protection for a period after death, ensuring that digital clones of deceased individuals cannot be exploited without the estate’s consent.

Comparison: NO FAKES Act vs. State Laws (2026)

| Feature | NO FAKES Act (Federal) | California (CCPA/CPRA) | Tennessee (ELVIS Act) |
|---|---|---|---|
| Scope | Nationwide (US) | State-wide (CA residents) | Music/Voice focused |
| Protection Type | Likeness & Voice | Privacy & Data Rights | Artistic Likeness |
| Commercial Liability | Yes | Yes | High |
| Defamation Clause | Strong | Moderate | Moderate |

Who the law helps first and who still struggles

The strongest immediate beneficiaries are people whose likeness is clearly being used without consent in a way that can be documented:

- performers and creators whose voice or image is used commercially

- public figures targeted by viral impersonation campaigns

- private citizens caught in scam ads, fake endorsements, or reputational attacks

But the harder cases remain harder:

- anonymous deepfake networks operating across borders

- synthetic content hosted briefly and mirrored rapidly across platforms

- harms that are social or reputational, but difficult to price in court

That matters because digital identity abuse rarely behaves like a clean copyright violation. It spreads fast, fragments across platforms, and often causes harm before a takedown request is even reviewed.

What the NO FAKES Act does not solve

The law is important, but readers should not treat it as a complete answer to deepfake abuse.

It does not automatically solve:

- how platforms identify synthetic content before it goes viral

- how victims prove harm across multiple reposts and derivative edits

- how non-US operators are forced into compliance

- how your original biometric material was captured in the first place

In other words, the act creates a stronger legal route for response, but it does not eliminate the underlying economics that make impersonation cheap and scalable.

The Sovereignty Gap: Why Legal Protection Isn’t Enough

While the NO FAKES Act provides a legal “shield” after the damage is done, it doesn’t prevent your data from being stolen in the first place. For true digital independence, we recommend the following:

- Local-First Biometrics: Only store your biometric data (face IDs, voice samples) on devices you physically control.

- Use Privacy-First AI: When training personal voice models for legitimate use, choose tools that run entirely on your own hardware (in the spirit of local model runners such as Ollama) or other self-hosted alternatives, so that your “original” data never leaves your network.

- Watermarking: Use open-source tools to add invisible watermarks to your authentic photos and videos, which helps demonstrate that they are the originals.

What ordinary users should do now

Even if you are not a celebrity, your voice and likeness are now attack surfaces. A practical response in 2026 looks like this:

- Reduce the amount of clean voice and video data you publish publicly.

- Lock down social profiles so impersonators cannot easily scrape family details, employer names, and contact patterns.

- Create verification habits with relatives, especially older family members, so they do not trust a voice message just because it sounds like you.

- Document originals for important media using local archives and trusted timestamps.

The best identity protection strategy is not only legal. It is procedural.
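The "document originals" habit from the list above can be sketched as a small local script that records a SHA-256 fingerprint for every file in an archive folder. This is one possible approach, not a prescribed method: the function names and manifest layout are assumptions, and the timestamp comes from your own clock, so it is not a trusted timestamp by itself. For that, you would additionally submit the manifest's hash to an independent timestamping service (for example, one that implements RFC 3161).

```python
import hashlib
import json
from datetime import datetime, timezone
from pathlib import Path


def sha256_of(path: Path) -> str:
    """Hash a file in chunks so large videos do not need to fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(1 << 20), b""):
            digest.update(chunk)
    return digest.hexdigest()


def build_manifest(folder: Path) -> dict:
    """Map each file under `folder` to its SHA-256 digest."""
    files = sorted(p for p in folder.rglob("*") if p.is_file())
    return {
        # Local clock only; this is not a trusted timestamp on its own.
        "created_utc": datetime.now(timezone.utc).isoformat(),
        "files": {p.relative_to(folder).as_posix(): sha256_of(p) for p in files},
    }


def write_manifest(folder: Path, out_file: Path) -> None:
    """Save the manifest as JSON; keep `out_file` outside `folder`."""
    out_file.write_text(json.dumps(build_manifest(folder), indent=2))
```

A call such as `write_manifest(Path("originals"), Path("manifest.json"))` (both paths hypothetical) produces a file you can store alongside your backups. If a disputed clip later surfaces, a matching digest shows that your archived copy has not changed since the manifest was written.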

Frequently Asked Questions (FAQ)

Can I sue if someone makes a parody deepfake of me?

The NO FAKES Act includes exemptions for “fair use,” including news reporting, documentary work, and parody. However, if the parody is used to scam users or damage your reputation for profit, you may still have a case.

Does this law apply to AI models trained on my data?

Not directly. The NO FAKES Act covers the output (the replica). The input (training data) is currently being addressed under separate copyright and data sovereignty lawsuits.

How do I file a takedown notice?

Under the 2026 guidelines, you must provide a link to the unauthorized content and proof of identity to the platform’s registered agent. Platforms are required to respond within 24 hours for “high-risk” deepfakes.
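For readers who want to track their own notices, the requirements above can be sketched as a simple record. This is an illustrative data structure only: the class, its field names, and the example URL are hypothetical, no platform API is implied, and the 24-hour window is the figure quoted in this article for "high-risk" deepfakes.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Optional

# Response window stated in the article for "high-risk" deepfakes.
HIGH_RISK_RESPONSE_WINDOW = timedelta(hours=24)


@dataclass(frozen=True)
class TakedownNotice:
    """Hypothetical record of a notice; field names are illustrative only."""
    content_url: str          # link to the unauthorized content
    identity_proof_ref: str   # pointer to the proof-of-identity document you sent
    sent_at: datetime         # when the notice reached the platform's agent
    high_risk: bool = False

    def __post_init__(self) -> None:
        if not self.content_url.startswith(("http://", "https://")):
            raise ValueError("content_url must be a full link")
        if not self.identity_proof_ref:
            raise ValueError("proof of identity is required")
        if self.sent_at.tzinfo is None:
            raise ValueError("sent_at must be timezone-aware")

    def response_due(self) -> Optional[datetime]:
        """Deadline for a platform response, or None if no window is stated."""
        if self.high_risk:
            return self.sent_at + HIGH_RISK_RESPONSE_WINDOW
        return None


notice = TakedownNotice(
    content_url="https://example.com/video/123",
    identity_proof_ref="id-scan-2026-04-02.pdf",
    sent_at=datetime(2026, 4, 2, 9, 30, tzinfo=timezone.utc),
    high_risk=True,
)
print(notice.response_due())  # 2026-04-03 09:30:00+00:00
```

Keeping a record like this for each platform makes it easier to show when a notice was sent and whether a response arrived within the stated window.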

Does the act protect ordinary people or mostly celebrities?

It protects both, and that is one of the most important shifts. Previous publicity-right frameworks often worked best for famous people with obvious commercial value. The NO FAKES Act matters because it strengthens the idea that ordinary citizens also have a protectable interest in their own face, voice, and digital identity.

What is the biggest weakness in the law?

Enforcement speed. A takedown route helps, but it still comes after the content exists. In deepfake cases, the most damaging phase is often the first wave of circulation, when false content spreads faster than any legal remedy can catch it.

What this means for sovereignty

The sovereignty lesson is that your identity is now infrastructure. Your face, voice, and mannerisms are no longer just personal traits; they are reusable digital inputs that can be copied, monetized, and weaponized.

Laws like the NO FAKES Act matter because they recognize that reality. But the most resilient posture still combines legal rights with technical restraint: collect less, expose less, and keep the most sensitive biometric data under your own control whenever possible.
