⚠️ Legal Disclaimer: This article is for informational and educational purposes only. It does not constitute legal advice and should not be relied upon as such. Laws and regulations vary by jurisdiction and change over time. For advice specific to your situation, consult a qualified legal professional in India. Vucense is not a law firm and no attorney-client relationship is created by reading this content.

- The Policy: India’s 2026 IT Rule amendments and the Digital Personal Data Protection (DPDP) Act entered full enforcement on March 26, 2026, requiring strict provenance and accountability for all AI-powered services operating in India.

- Who Is Affected: All social media platforms, AI startups, enterprise agent builders, and cloud providers serving Indian residents.

- The Compliance Deadline: Immediate enforcement for 3-hour deepfake takedowns and AI content labeling.

- The Sovereign Action: Builders must audit their Model Context Protocol (MCP) servers and RAG pipelines for DPDP compliance, and ensure every API endpoint uses automated masking and identity disclosure protocols before Q3 2026.

Introduction: India’s AI Rules and the 2026 Regulatory Landscape

Direct Answer: What is India’s 2026 AI Policy and what does it require?

India’s 2026 AI regulatory framework rests on two pillars: the 2026 IT Rule amendments and the full implementation of the Digital Personal Data Protection (DPDP) Act. Together they require that all AI-generated content, from text to deepfakes, be clearly labeled for provenance. Platforms must remove non-consensual deepfakes within a strict 3-hour window and may be compelled to disclose the identity of AI creators to victims of impersonation or deceptive content.

For AI builders, every component of the pipeline that processes personal data, including LLMs, RAG pipelines, and Model Context Protocol (MCP) servers, must follow data minimization and masking rules. From a sovereignty perspective, the rules push for greater platform accountability and transparency and align with the Bharatiya Nyaya Sanhita on AI-enabled crimes. To stay compliant, builders should treat APIs as critical privacy control points and implement rate limiting and automated provenance tracking to protect both users and creators.

The Vucense 2026 India AI Compliance Index

| Deployment Model | Compliance Score | Key Requirement |
|---|---|---|
| Local-First AI | 95/100 | Minimal data exposure; provenance still required. |
| Enterprise RAG | 70/100 | Must audit all vector store data for DPDP compliance. |
| Cloud-Only LLMs | 45/100 | High risk of data harvesting; needs strict API masking. |
| Open-Source Agents | 85/100 | Easier to audit but must implement takedown protocols. |

🆕 Latest Developments: March 2026

- 3-Hour Takedowns: Social media platforms are now legally obligated to remove AI-generated deepfake content within 3 hours of a victim’s request.

- High-Risk AI Prohibitions: New guidance strictly prohibits the use of AI for non-consensual sexual content or deceptive impersonation of government officials.

- Identity Disclosure: Platforms can now be compelled by law enforcement to disclose the source identity of AI-generated content if it is found to be harmful or illegal.

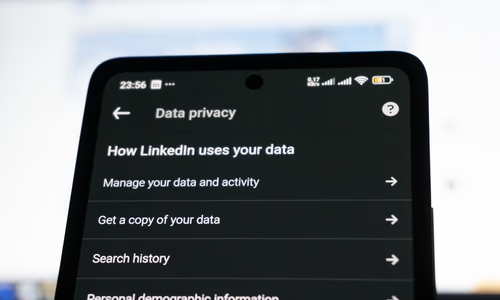

DPDP + AI Systems: The Core Obligations

The Digital Personal Data Protection (DPDP) Act fully applies to all modern AI architectures when personal data is involved. This includes:

- LLMs and Training Sets: You must ensure that no personal data is included in training sets without explicit, informed consent.

- RAG Pipelines: Retrieval-Augmented Generation (RAG) must use anonymized or masked data from vector stores.

- Model Context Protocol (MCP) Servers: These servers, which supply context to AI agents, are now treated as primary data controllers (Data Fiduciaries, in the Act’s terminology) under DPDP.

- Vector Stores: Must implement strict access controls and regular data audits to prevent unauthorized data exposure.
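One way to picture the RAG and vector store obligations above is a retrieval filter that drops any chunk whose personal data is not covered by consent for the current purpose. This is a minimal sketch, not a prescribed DPDP mechanism: the `Chunk` type, its `contains_personal_data` flag, and the `consented_purposes` field are hypothetical names, and it assumes each chunk was tagged with that metadata at indexing time.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Chunk:
    """A retrieved passage plus hypothetical consent metadata set at indexing time."""
    text: str
    contains_personal_data: bool
    consented_purposes: frozenset = frozenset()


def allowed_chunks(chunks, purpose):
    """Keep chunks with no personal data, or whose recorded consent covers `purpose`."""
    return [
        c for c in chunks
        if not c.contains_personal_data or purpose in c.consented_purposes
    ]


retrieved = [
    Chunk("Product manual, section 4", False),
    Chunk("Support ticket from a named customer", True, frozenset({"support"})),
    Chunk("Marketing survey response", True, frozenset({"marketing"})),
]

# Only the first two chunks may be passed to the model for a support query.
support_context = allowed_chunks(retrieved, "support")
```

Filtering after retrieval keeps the example short; a real system would more likely push the same condition into the vector store query so that unconsented data never leaves storage.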

API Security as a Privacy Control Point

In 2026, your API is your most important privacy firewall. To be DPDP-compliant, AI builders should implement:

- Auth & Rate Limiting: Prevent scraping of sensitive data through AI prompts.

- Automated Masking: Strip PII (Personally Identifiable Information) before it reaches the LLM.

- Anomaly Detection: Monitor for unusual prompt patterns that might indicate a data extraction attack.
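The first two controls in the list above can be sketched with the Python standard library alone. The patterns below are illustrative assumptions: they cover only email addresses and Indian mobile numbers, and `mask_pii` and `SlidingWindowLimiter` are hypothetical names rather than part of any framework. A production system would need far broader PII detection than two regular expressions.

```python
import re
import time
from collections import defaultdict, deque

# Illustrative patterns only: email addresses and 10-digit Indian mobile numbers.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE_RE = re.compile(r"(?<!\d)(?:\+91[\s-]?)?[6-9]\d{9}(?!\d)")


def mask_pii(prompt: str) -> str:
    """Replace matched emails and phone numbers before the prompt reaches the LLM."""
    prompt = EMAIL_RE.sub("[EMAIL]", prompt)
    return PHONE_RE.sub("[PHONE]", prompt)


class SlidingWindowLimiter:
    """Allow at most `max_requests` per client within any `window_seconds` span."""

    def __init__(self, max_requests: int, window_seconds: float):
        self.max_requests = max_requests
        self.window_seconds = window_seconds
        self._hits = defaultdict(deque)  # client_id -> timestamps of accepted requests

    def allow(self, client_id: str, now: float = None) -> bool:
        now = time.monotonic() if now is None else now
        hits = self._hits[client_id]
        # Discard timestamps that have aged out of the window.
        while hits and now - hits[0] >= self.window_seconds:
            hits.popleft()
        if len(hits) >= self.max_requests:
            return False
        hits.append(now)
        return True


masked = mask_pii("Mail priya@example.com or call +91 9876543210")
limiter = SlidingWindowLimiter(max_requests=2, window_seconds=60.0)
```

The `now` parameter exists so the limiter can be tested with fixed timestamps. Rejected requests are a natural input for the anomaly detection step, since repeated rejections for one client are one signal of a scraping attempt.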

The 2026 IT Rule Amendments: Provenance & Deepfakes

The IT Rule amendments are designed to combat the rise of AI-enabled misinformation and deepfakes.

Provenance Tracking

Every piece of AI-generated content must carry a digital watermark or metadata that identifies it as AI-generated and points back to its source. Failure to include this provenance is a direct violation of the IT Rules.
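As a rough illustration of provenance metadata, the sketch below builds a small record that marks content as AI-generated, names its source, and binds the record to the content with a SHA-256 hash. The field names and the `generator_id` value are assumptions made for this example; the article does not specify a metadata format, and this record is unsigned, so on its own it cannot prove who created it.

```python
import hashlib
import json
from datetime import datetime, timezone


def build_provenance(content: bytes, generator_id: str, created_at: datetime) -> dict:
    """Build an unsigned provenance record tied to `content` by its SHA-256 hash."""
    return {
        "ai_generated": True,
        "generator_id": generator_id,  # hypothetical identifier for the source system
        "created_at": created_at.astimezone(timezone.utc).isoformat(),
        "sha256": hashlib.sha256(content).hexdigest(),
    }


def matches_provenance(content: bytes, record: dict) -> bool:
    """Check that the record is an AI label and its hash matches this exact content."""
    return (
        record.get("ai_generated") is True
        and record.get("sha256") == hashlib.sha256(content).hexdigest()
    )


image_bytes = b"example generated image bytes"
record = build_provenance(
    image_bytes,
    generator_id="example-model-v1",
    created_at=datetime(2026, 3, 26, tzinfo=timezone.utc),
)
sidecar_json = json.dumps(record, indent=2)  # could be stored alongside the file
```

Because the hash covers the exact bytes, any edit to the content invalidates the record. That makes tampering detectable, but it also means re-encoding or resizing requires a fresh record.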

The Accountability Pivot

India has shifted from “platform immunity” to “platform accountability.” If a platform fails to remove a harmful deepfake within the 3-hour window, it can be held legally responsible for the damages caused by that content. Aligning these rules with the Bharatiya Nyaya Sanhita, India’s criminal code, gives AI-enabled crimes clear legal consequences.
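For a moderation queue, the 3-hour window reduces to simple deadline arithmetic. The sketch below assumes the clock starts when the victim’s request is received, which is one reading of the rule, and the function names are hypothetical.

```python
from datetime import datetime, timedelta, timezone

TAKEDOWN_WINDOW = timedelta(hours=3)


def takedown_deadline(reported_at: datetime) -> datetime:
    """Latest time by which the reported content must be removed."""
    return reported_at + TAKEDOWN_WINDOW


def time_remaining(reported_at: datetime, now: datetime) -> timedelta:
    """Time left before the deadline, floored at zero once it has passed."""
    return max(takedown_deadline(reported_at) - now, timedelta(0))


def is_overdue(reported_at: datetime, now: datetime) -> bool:
    """True once the removal deadline has passed."""
    return now > takedown_deadline(reported_at)


reported = datetime(2026, 3, 26, 9, 0, tzinfo=timezone.utc)
checked = datetime(2026, 3, 26, 11, 30, tzinfo=timezone.utc)
remaining = time_remaining(reported, checked)  # 30 minutes left at 11:30 UTC
```

Using timezone-aware datetimes throughout avoids off-by-hours errors when reports and moderators sit in different time zones.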

Frequently Asked Questions

What is the simplest first step to improve my digital privacy?

Start with your browser and search engine. Switch to Firefox with uBlock Origin, and use a privacy-first search engine like Brave Search or DuckDuckGo. This alone eliminates the majority of passive tracking.

Is true privacy online possible in 2026?

Complete anonymity is extremely difficult, but meaningful privacy is achievable. Using a VPN, encrypted messaging, and privacy-respecting services dramatically reduces exposure. The goal is data minimisation, not perfection.

What is the difference between privacy and security?

Privacy is about controlling who sees your data. Security is about protecting data from unauthorised access. Sovereign tech prioritises both together.

What to do next

DPDP compliance is a reminder that privacy architecture must be designed in rather than added on: the Act’s requirements for purpose limitation, data minimisation, and consent management are architectural constraints, not documentation tasks. AI builders who treat them as the latter will face rework costs that dwarf the cost of getting the architecture right at the design stage.

What this means for sovereignty

India’s DPDP Act makes the privacy-sovereignty link legally binding: AI builders who route personal data through foreign inference pipelines now face compliance exposure that a contractual SLA cannot resolve. Keeping sensitive data on Indian infrastructure is not just a best practice in 2026 — for many categories of personal data, it is a legal requirement.
