Why a hidden 4GB model matters

A 4GB model is not a typical browser download. It is a set of neural network weights large enough to power on-device reasoning and assistant features. When Chrome pulls this down without a clear prompt, the product stops feeling like a browser and starts feeling like an AI platform installed on your machine.

This is not just about disk space. It is a new trust boundary. A silent model download expands Chrome’s software stack and makes the browser responsible for hosting a large AI asset on your system. That is the moment Chrome stops being only a tool for rendering web pages and starts making privacy decisions for you.

The Vucense view

At Vucense, we are not against on-device AI. We are against surprise installs. A truly sovereign AI experience is one you opt into, understand, and control.

Hidden model downloads do the opposite. They turn Chrome into a default AI host and normalize the idea that large model weights can quietly become part of your browser runtime. Once that happens, the default shifts from “ask first” to “decide for the user first.”

How Chrome is moving from web browser to AI host

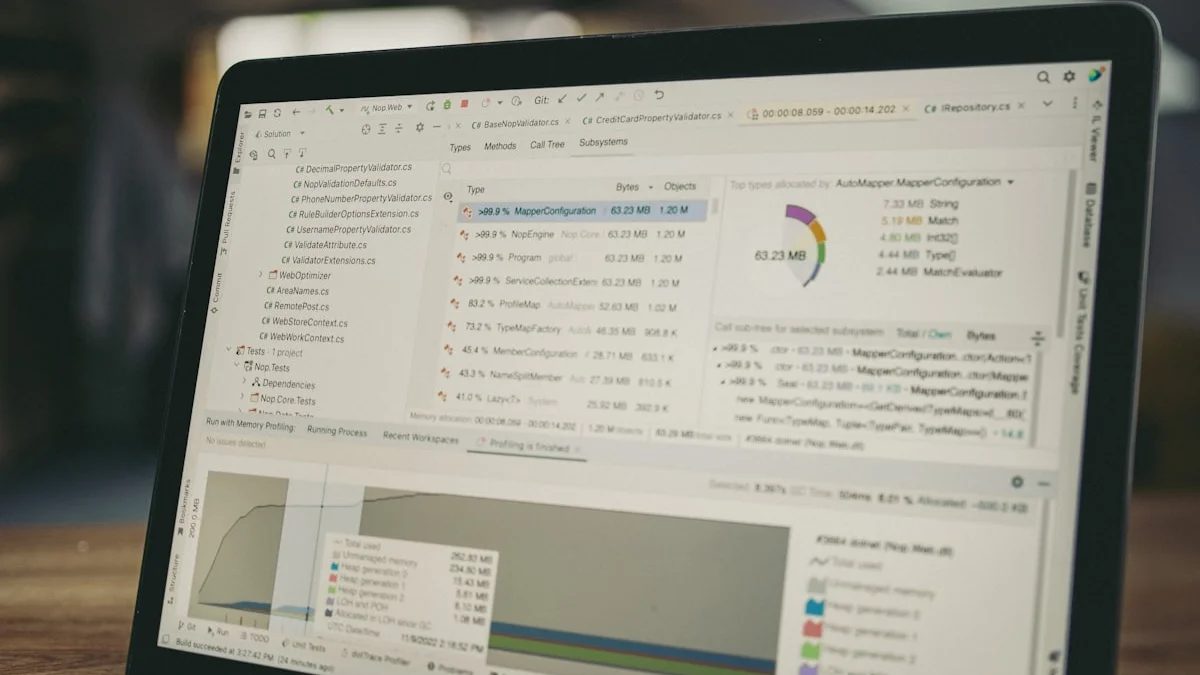

Google has been pushing local AI into its products for months. On desktop, the path is now unmistakable: Chrome is being updated with features that rely on downloaded model weights instead of only cloud calls. Early reports from Dev and Canary builds suggest those weights are treated like a cached local asset, which is what turns a browser into a local AI runtime.

Because the model is large, Chrome cannot treat it like a normal JavaScript library or UI asset. Instead, the browser must store it in an opaque cache, potentially under the user profile or system data folder. That makes the download visible to disk management tools, but not always obvious to the average person.

At Vucense, we see this as a broader pattern: Big Tech tends to ship local AI before it ships the controls for consent, storage, and explicit model ownership. That is why we draw a sharp line between “on-device AI you opt into” and “on-device AI your browser installs for you.”

What this means for privacy and sovereignty

Even if the assistant feature is off, the presence of a model file on your machine matters:

- Storage and performance: 4GB of additional files can strain small SSDs and inflate backups.

- Unexpected updates: Chrome’s auto-update mechanism may refresh the model silently, installing new weights without a separate consent flow.

- Attack surface: A large local model is one more component that a compromised browser process could tamper with, abuse, or exfiltrate.

- Decision control: Users should choose if and when to download AI weights; bundling them into browser updates removes that agency.

This is the opposite of on-device sovereignty. Sovereignty is about the user owning the compute stack and explicitly inviting AI weights onto the device, not about a browser quietly depositing them behind the scenes.

Why this matters in 2026

The Chrome case is important because it shows how quickly browser vendors can shift from a web-first product to a local AI platform. In 2026, the question is not whether browsers can run AI; it is whether they do so with explicit user consent and clear controls.

What Google should do instead

A sovereign approach to browser AI would include:

- A clear opt-in prompt before downloading any local AI model.

- A separate model management UI that shows how much space the model takes and where it is stored.

- A single global toggle for disabling all local AI weight downloads and cache refreshes.

- An explicit policy for model retention and automatic deletion when the feature is turned off.

Without those protections, Chrome’s current path looks like an AI upgrade that happens by default and then tries to justify itself after the fact.
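
To make the four controls above concrete, here is a minimal sketch of what a consent-gated download policy could look like. It is purely illustrative and is not Chrome's implementation or API: the names `ModelRecord`, `LocalModelPolicy`, `may_download`, and `disable_feature`, and the example model ID and path, are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Tuple


@dataclass
class ModelRecord:
    """Hypothetical description of one local AI model."""
    model_id: str
    size_bytes: int
    storage_path: str
    consented: bool = False   # set only by an explicit opt-in prompt
    downloaded: bool = False


@dataclass
class LocalModelPolicy:
    """Hypothetical policy: nothing downloads unless the user asked for it."""
    downloads_enabled: bool = False  # single global toggle, off by default
    models: Dict[str, ModelRecord] = field(default_factory=dict)

    def register(self, record: ModelRecord) -> None:
        self.models[record.model_id] = record

    def grant_consent(self, model_id: str) -> None:
        # Would be called only after the user accepts a clear opt-in prompt.
        self.models[model_id].consented = True

    def may_download(self, model_id: str) -> bool:
        # Requires both the global toggle and per-model consent.
        record = self.models.get(model_id)
        return record is not None and self.downloads_enabled and record.consented

    def mark_downloaded(self, model_id: str) -> None:
        if not self.may_download(model_id):
            raise PermissionError(f"no consent to download {model_id}")
        self.models[model_id].downloaded = True

    def disable_feature(self, model_id: str) -> Optional[str]:
        """Withdraw consent and return the path to delete, if any (retention rule)."""
        record = self.models[model_id]
        record.consented = False
        if record.downloaded:
            record.downloaded = False
            return record.storage_path
        return None

    def storage_report(self) -> List[Tuple[str, int, str]]:
        # What a model management UI could show: ID, size, and location.
        return [
            (r.model_id, r.size_bytes, r.storage_path)
            for r in self.models.values()
            if r.downloaded
        ]
```

The design choice worth noting is that the default state answers "no" to every download request: both the global toggle and the per-model consent must be switched on by the user, and turning a feature off hands back the path that should be deleted.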

What to do now

- Open Chrome’s Privacy and Security settings and see whether any new AI or assistant options have appeared.

- Search your Chrome profile directories for large model files and delete them if you do not want this feature.

- Keep AI and assistant features off until Chrome gives you explicit control over local model downloads.

- If you want a cleaner experience, choose a browser that treats AI models as an optional add-on, not a silent background asset.
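
The search for large files in the steps above can be scripted. The following is a minimal sketch, not an official tool: it only lists files above a size threshold and deletes nothing. The function names and the 500 MiB default threshold are assumptions chosen for illustration, and because the model's file name and exact location are not established here, the script filters on size alone. You can find your actual profile directory under "Profile Path" on the chrome://version page.

```python
import os
import sys
from pathlib import Path


def find_large_files(root, min_bytes=500 * 1024 * 1024):
    """Return (path, size_in_bytes) pairs for files under root of at least min_bytes.

    Results are sorted largest first. Symlinks and unreadable files are skipped.
    """
    results = []
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            path = Path(dirpath) / name
            try:
                if path.is_symlink():
                    continue
                size = path.stat().st_size
            except OSError:
                continue  # file vanished or permission denied
            if size >= min_bytes:
                results.append((path, size))
    results.sort(key=lambda item: item[1], reverse=True)
    return results


def format_size(num_bytes):
    """Format a byte count in GiB with two decimals."""
    return f"{num_bytes / (1024 ** 3):.2f} GiB"


if __name__ == "__main__" and len(sys.argv) > 1:
    # Usage: python find_large_files.py <path-to-chrome-profile-directory>
    for found_path, found_size in find_large_files(sys.argv[1]):
        print(f"{format_size(found_size):>12}  {found_path}")
```

Review the output before removing anything: a browser profile also holds caches and databases that can legitimately be large.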

How to protect your system today

If this worries you:

- Leave any new Chrome AI or assistant options turned off.

- Check your Chrome profile regularly for unexpected large files.

- Prefer a browser built around minimalism and user control when hidden AI asset downloads are a concern.

- Watch Chrome release notes and privacy audits for changes to how local models are handled.

A better browser experience is one where weight downloads are explicit and AI assets are kept separate from normal browsing data.
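
Checking a profile "regularly" is easier if you compare snapshots rather than eyeballing folders. The sketch below records the sizes of large files under a directory and reports what was added, removed, or resized since the previous snapshot. It is one possible approach under stated assumptions: the function names, the JSON snapshot format, and the 100 MiB default threshold are all illustrative, and a changed size is only a hint that a file was refreshed, not proof of a model update.

```python
import json
import os
from pathlib import Path


def snapshot(root, min_bytes=100 * 1024 * 1024):
    """Map each large file's path (relative to root) to its size in bytes."""
    root = Path(root)
    sizes = {}
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            path = Path(dirpath) / name
            try:
                size = path.stat().st_size
            except OSError:
                continue  # skip unreadable or vanished files
            if size >= min_bytes:
                sizes[str(path.relative_to(root))] = size
    return sizes


def diff_snapshots(old, new):
    """Compare two snapshots and list added, removed, and resized paths."""
    old_paths, new_paths = set(old), set(new)
    return {
        "added": sorted(new_paths - old_paths),
        "removed": sorted(old_paths - new_paths),
        "resized": sorted(p for p in old_paths & new_paths if old[p] != new[p]),
    }


def save_snapshot(sizes, file_path):
    """Write a snapshot to disk as JSON."""
    Path(file_path).write_text(json.dumps(sizes, indent=2))


def load_snapshot(file_path):
    """Read a snapshot previously written by save_snapshot."""
    return json.loads(Path(file_path).read_text())
```

A typical routine would be to call `snapshot` on the profile directory after each browser update, pass the result to `diff_snapshots` along with the previously saved snapshot, and then save the new one.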

Why this matters for 2026 sovereign AI

The event is significant because it reveals a pattern that will define the next phase of consumer AI: companies are eager to ship local model support, but they are not always willing to ask first.

A truly sovereign AI stack requires explicit user consent before any large-weight download. Chrome’s behavior is a reminder that local AI is only sovereign if it is installed under the user’s terms.

Sources & Further Reading

- Google Chrome AI feature documentation and release notes

- Early reports from Chrome Dev and Canary builds on local model caching behavior

- Vucense analysis of local model consent, user control, and browser sovereignty

- Open source on-device AI project best practices for explicit model downloads

Direct answer: is Chrome secretly downloading an AI model?

Based on early reports, yes. Chrome is moving toward a browser architecture where a local AI model can be downloaded and cached without a separate user opt-in, which makes the browser less transparent and gives its owner less control. The safer architecture for sovereign users is an explicitly opt-in local AI download, not a hidden background installation.