Andy Jassy’s annual shareholder letter, published April 9, 2026, contains the most significant public statement Amazon has made about its AI chip ambitions. The company’s custom silicon business generates over $20 billion annually and is growing at triple-digit rates, its Trainium accelerators are sold out or reserved two generations ahead, and Jassy is openly considering selling chips to external buyers, which would turn Amazon from a company that builds chips for its own cloud into a direct Nvidia competitor. He writes: “Virtually all AI thus far has been done on NVIDIA chips, but a new shift has started.”

Direct Answer: What did Amazon’s Andy Jassy say about AI chips in his 2026 shareholder letter? In his April 9, 2026 annual shareholder letter, Amazon CEO Andy Jassy disclosed that AWS AI revenue reached a $15 billion annual run rate in Q1 2026. Amazon’s custom chip business, comprising Trainium (AI accelerator), Graviton (general-purpose CPU), and Nitro (infrastructure chip), generates over $20 billion in annualised revenue and is growing at triple-digit rates year-on-year. If Amazon sold chips to external buyers as Nvidia does, Jassy estimates the business would be at a $50 billion ARR. Trainium2 is completely sold out; Trainium3 reached customers in early 2026 with nearly all supply reserved; Trainium4 already has substantial pre-orders despite being 18 months from wide release. Jassy stated he is considering selling Trainium racks to third parties, a direct challenge to Nvidia’s dominance, and wrote that while Nvidia chips have powered most AI, “a new shift has started.”

The Shareholder Letter as a Competitive Manifesto

Andy Jassy’s annual letters are among the most closely read CEO communications in technology. Unlike quarterly earnings calls, which are constrained by investor relations caution, the annual letter is Jassy’s opportunity to frame Amazon’s long-term thesis directly in his own words.

The 2026 letter is notably aggressive. TechCrunch described it as reading like “a Kendrick Lamar diss track, if the rapper was a corporate-speak-talking CEO”: Jassy takes aim at competitors, often without attacking them by name. He challenges Nvidia on chips, Intel on CPUs, and SpaceX’s Starlink on satellite internet. The common thread is that Amazon is building in categories where it is not yet recognised as a leader, at a scale the market has underestimated.

The chip business is the centrepiece.

The $15 Billion AWS AI Revenue Milestone

The headline financial disclosure: AWS AI revenue run rate crossed $15 billion in Q1 2026.

Jassy contextualises this with a striking comparison: AWS’s AI revenue is growing approximately 260× faster than AWS itself grew at a comparable stage of its development. AWS, now the world’s most profitable cloud business, was itself regarded as a hypergrowth business for much of its history. The suggestion is that AI infrastructure is growing far faster than cloud computing did.

Amazon Bedrock, the managed service through which AWS customers access foundation models (including Amazon’s own Nova family, alongside Claude, Gemini, and others), processed more tokens in Q1 2026 than in all prior periods combined. Inference volumes were “nearly doubling month-over-month” in March — a compounding growth rate that, if sustained, puts Bedrock on a trajectory that would have seemed impossible to model six months ago.
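To make the compounding concrete, here is a minimal sketch that projects the cumulative growth multiple under a constant monthly growth factor. The factor 2.0 is an upper bound for “nearly doubling”, the 1.8 factor is purely illustrative, and the function name is hypothetical; none of these are figures from the letter.

```python
def projected_multiple(monthly_factor: float, months: int) -> float:
    """Return the cumulative growth multiple after compounding
    `monthly_factor` for `months` consecutive months."""
    if months < 0:
        raise ValueError("months must be non-negative")
    return monthly_factor ** months


# Compare a full doubling with a slower, assumed 1.8x monthly factor.
for months in (3, 6, 12):
    doubling = projected_multiple(2.0, months)
    slower = projected_multiple(1.8, months)
    print(f"{months:>2} months: {doubling:,.0f}x at 2.0, {slower:,.1f}x at 1.8")
```

At a full doubling, six months compounds to 64× and twelve months to 4,096×, which is why a rate like this can only describe a short window rather than a multi-year trend.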

The capex commitment: Amazon has committed $200 billion in capital expenditure in 2026, the largest single-year tech infrastructure investment in history. Jassy defends this figure directly in the letter: the majority of AWS capex for 2026 is already backed by customer commitments. He distinguishes Amazon’s infrastructure investment from speculative buildout — “we are not betting on AI on a hunch.”

The OpenAI commitment: OpenAI committed in February 2026 to use $100 billion in AWS services over eight years — one of the largest cloud commitments in the industry’s history. Jassy notes that “several other customer agreements completed (and unannounced), or deep in process” back the infrastructure expansion.

Trainium: From Internal Use to Potential Nvidia Rival

The most significant strategic disclosure in the letter is Jassy’s statement about selling Trainium to third parties.

Currently, Amazon’s custom chips (Trainium, Graviton, and Nitro) are deployed only inside AWS data centres. Customers cannot buy Trainium chips; they can only rent compute time on Trainium-powered EC2 instances. This is fundamentally different from Nvidia’s model, where customers can purchase H100 or Blackwell GPUs, deploy them on-premises, and run workloads without AWS involvement.

Jassy floated changing this: “There’s so much demand for our chips that it’s quite possible we’ll sell racks of them to third parties in the future.”

If Amazon begins selling Trainium to external buyers (hospitals running on-premises AI, enterprises with data sovereignty requirements, governments building national AI infrastructure), it becomes a merchant chip vendor competing directly with Nvidia, AMD, and Intel.

The demand figures Jassy disclosed make the logic clear:

- Trainium2: Completely sold out. No available capacity.

- Trainium3: Reached customers in early 2026; “nearly all available supply” has been reserved.

- Trainium4: Not expected for wide release for another 18 months — yet already carries “substantial pre-orders.”

- Graviton: Two unnamed customers asked to purchase all of Amazon’s Graviton instance capacity for 2026. Amazon said no to both because of other customer commitments. “It gives you an idea of the demand,” Jassy writes.

At this demand level, the constraint is manufacturing capacity, not market interest. If Amazon can scale Trainium production sufficiently to serve external buyers, the $50 billion ARR estimate is not hyperbole — it is arithmetic based on a known addressable market.

The Direct Nvidia Challenge

Jassy’s statement that “Virtually all AI thus far has been done on NVIDIA chips, but a new shift has started” is among the most aggressive public claims a major Nvidia competitor has made about displacing Nvidia in AI compute.

He is careful to preserve the partnership. In the same letter: “We have a strong partnership with NVIDIA, will always have customers who choose to run NVIDIA, and we will continue to make AWS the best place to run NVIDIA.” This is standard competitive framing — acknowledge the incumbent while explaining why you are a viable alternative.

The substance behind the framing: Amazon’s Trainium chips already run Anthropic’s Claude models, OpenAI workloads on AWS, and the majority of Amazon’s own AI inference. They achieve this through software compatibility with the PyTorch ecosystem and through pricing that Jassy claims delivers better performance-per-dollar than Nvidia for inference-heavy workloads.

The inference advantage: Training large language models still heavily favours Nvidia GPUs — Nvidia’s CUDA ecosystem, driver support, and software tooling have years of head start. Inference (serving model responses to users) is where custom ASICs can compete. Inference workloads are more repetitive and predictable than training; this makes them well-suited for purpose-built chips. Amazon runs billions of inference queries daily across Bedrock and its own AI features — Trainium’s performance at inference scale is field-tested in production.

Trainium’s projected savings: Jassy states that Trainium is expected to “save us tens of billions of capex dollars per year” and provide “several hundred basis points of operating margin advantage versus relying on others’ chips for inference.” This is the true business case for the chip investment — not competing with Nvidia for ego, but reducing Amazon’s largest and fastest-growing cost centre.
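For readers unfamiliar with the unit, 100 basis points equal one percentage point of margin. The sketch below converts a margin advantage in basis points into operating income on a revenue base. The $100 billion revenue figure and the 200 to 400 bps range are hypothetical placeholders chosen for round numbers, not figures from the letter, and the function name is illustrative.

```python
def margin_uplift(revenue_usd: float, basis_points: float) -> float:
    """Operating income gained from a margin improvement expressed
    in basis points (1 bp = 0.01 percentage points)."""
    return revenue_usd * basis_points / 10_000


# Assumed revenue base for illustration only; not taken from the letter.
HYPOTHETICAL_REVENUE_USD = 100e9

for bps in (200, 300, 400):
    uplift = margin_uplift(HYPOTHETICAL_REVENUE_USD, bps)
    print(f"{bps} bps on $100B revenue -> ${uplift / 1e9:.1f}B operating income")
```

On any large revenue base, “several hundred basis points” translates into billions of dollars of operating income per year, which is the scale of saving Jassy is describing.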

The Intel Takedown

Jassy also directly challenges Intel’s x86 CPU dominance, though more gently.

AWS’s Graviton CPU — Amazon’s custom ARM-based processor — is “now used expansively by 98% of the top 1,000 EC2 customers.” Two companies asked to purchase all of Amazon’s Graviton capacity for 2026. Intel’s x86 processors still dominate enterprise computing, but the Graviton adoption rate suggests that for cloud-native workloads, ARM-based custom chips are increasingly the default.

This matters for the broader AI infrastructure story: the shift away from x86 CPUs (Intel’s stronghold) and toward custom ARM chips (Graviton) and custom AI accelerators (Trainium) represents a fundamental restructuring of the data centre hardware market. Intel, which has been struggling to maintain relevance in AI compute, is being squeezed from both directions.

Amazon Leo: The Starlink Competitor

The shareholder letter also contains a significant disclosure about Amazon Leo, the company’s satellite internet constellation — a direct competitor to SpaceX’s Starlink.

Amazon has launched more than 200 satellites to date and expects to bring Amazon Leo online commercially in mid-2026. The service has already secured contracts with Delta Air Lines, JetBlue, AT&T, Vodafone, and NASA.

This matters for AI infrastructure in a specific way: satellite connectivity is increasingly relevant for AI-enabled services in locations without terrestrial broadband, such as maritime, aviation, rural enterprise, and government settings. Amazon Leo gives Amazon an end-to-end stack for AI delivery that does not depend on third-party connectivity.

The Sovereignty Angle: What Third-Party Trainium Sales Would Mean

For Vucense readers, the potential for Amazon to sell Trainium chips to external buyers carries significant data sovereignty implications.

Currently, running inference on Trainium requires using AWS, which means data flows through Amazon’s cloud infrastructure, subject to AWS’s privacy policies and US legal jurisdiction. If Amazon sold Trainium as standalone hardware, organisations could run AI inference on-premises, with data staying within their own physical infrastructure.

This is the architecture required for:

- Government AI deployment handling classified or sensitive citizen data

- Healthcare AI processing medical records under HIPAA or GDPR

- Financial services AI with regulatory requirements around data residency

- Enterprise AI for organisations that cannot route sensitive intellectual property through cloud infrastructure

A standalone Trainium rack, purchased and operated on-premises, would enable sovereign AI inference at a scale currently only achievable with Nvidia H100 clusters. The $50 billion ARR Jassy envisions is partly this: organisations that want frontier-level AI compute without cloud dependency.

Amazon has not announced whether or when it will make this move. Jassy’s phrasing (“quite possible we’ll sell racks of them to third parties in the future”) is intentionally non-committal. But the financial logic is clear enough that the question is when, not whether.

FAQ

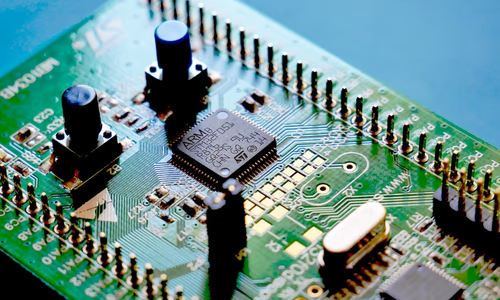

What is Amazon Trainium? Trainium is Amazon’s custom AI accelerator chip, designed specifically for machine learning training and inference workloads. Unlike Nvidia’s general-purpose GPUs, Trainium is purpose-built for the matrix operations that underlie large language model training and inference. It is manufactured by TSMC and is currently only available via AWS cloud instances.

How does Trainium compare to Nvidia H100? For inference workloads specifically, Trainium is designed to deliver competitive performance at lower cost than Nvidia H100. For training, Nvidia’s CUDA ecosystem and software tooling give it significant advantages. Anthropic, OpenAI, and Apple all use Trainium alongside Nvidia chips, matching workloads to the optimal hardware.

What is AWS AI revenue in 2026? According to Jassy’s shareholder letter, AWS AI revenue reached a $15 billion annual run rate in Q1 2026. The broader Amazon chip business (Trainium + Graviton + Nitro) generates over $20 billion annually.

Will Amazon sell Trainium chips directly to customers? Jassy stated in his April 9, 2026 letter that “it’s quite possible we’ll sell racks of them to third parties in the future.” No specific timeline or product announcement was made. Currently, Trainium is only accessible via AWS cloud instances.

What is Amazon Leo? Amazon Leo is Amazon’s low-Earth orbit satellite internet constellation, competing directly with SpaceX’s Starlink. Amazon has launched 200+ satellites and expects commercial availability in mid-2026, with contracts already signed with Delta, JetBlue, AT&T, Vodafone, and NASA.
