Anthropic’s Model Context Protocol crossed 97 million installs in March 2026. Sixteen months earlier, at its November 2024 launch, it was an experimental standard that only Claude supported. Today every major AI provider ships MCP-compatible tooling, and the protocol has become the default mechanism by which AI agents connect to external tools, APIs, and data sources. This is what a protocol winning an industry standard battle looks like in real time, and it matters enormously for anyone building software in 2026.

Direct Answer: What is MCP and why do 97 million installs matter? Model Context Protocol (MCP) is an open standard created by Anthropic that defines how AI agents communicate with external tools, data sources, and APIs. Instead of each AI provider implementing custom integrations for every tool (a separate integration for Slack, another for GitHub, another for Postgres), MCP provides a universal interface: build an MCP server once, and any MCP-compatible AI agent can use it. Crossing 97 million installs in March 2026 signals that MCP has won the AI tool integration standard battle: OpenAI, Google DeepMind, Microsoft, and Meta all now ship MCP-compatible tooling. For developers, this means the ecosystem of MCP servers (covering databases, APIs, code tools, productivity apps) is now accessible from any major AI agent, not just Claude.

What MCP Actually Is

MCP is a communication protocol — a set of rules governing how AI agents talk to external systems.

The problem it solves: every AI agent needs to connect to the real world — read files, query databases, call APIs, search the web, execute code. Before MCP, every AI provider implemented these connections differently. An integration built for Claude’s tool use API could not work with OpenAI’s function calling. A tool built for LangChain might not work with LlamaIndex. Every AI framework had its own integration layer, and connecting a new tool to multiple AI agents meant writing multiple separate integrations.

MCP’s solution: A standard client-server interface where:

- MCP clients are AI agents (Claude, GPT, Gemini, open-source models) that want to use external tools

- MCP servers are integrations that expose tools, resources, and prompts to those agents

- Any MCP client can connect to any MCP server — one integration works everywhere
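
The saving from a shared interface can be put in rough numbers. The sketch below is a back-of-the-envelope model, not a measurement: it assumes every agent framework would otherwise need its own adapter for every tool, and the counts of 5 agents and 40 tools are illustrative values that do not come from the article.

```python
def integrations_without_standard(num_agents: int, num_tools: int) -> int:
    """Every agent framework needs its own adapter for every tool."""
    return num_agents * num_tools


def integrations_with_standard(num_agents: int, num_tools: int) -> int:
    """Each agent implements one client and each tool ships one server."""
    return num_agents + num_tools


if __name__ == "__main__":
    agents, tools = 5, 40  # illustrative numbers, not taken from the article
    print(integrations_without_standard(agents, tools))  # 200
    print(integrations_with_standard(agents, tools))     # 45
```

The gap widens as either side grows, because the first count is a product and the second a sum.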

The protocol defines three primitive types that servers can expose:

Tools — actions the agent can take. Execute a database query, send a Slack message, run a terminal command, call an API endpoint. Tools have defined input schemas and return structured outputs.

Resources — data the agent can read. A file system, a database, a knowledge base, a code repository. Resources expose structured data that the agent can include in its context.

Prompts — reusable prompt templates that agents can invoke. Pre-built task definitions that standardise how certain types of requests are handled.
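
To make the three primitives concrete, here is a minimal sketch of how a server might describe one of each when a client asks what is available. The field names (`inputSchema`, `uri`, `mimeType`, `arguments`) and the `tools/list`, `resources/list`, and `prompts/list` method names follow the MCP specification; the specific tool, file, and prompt are hypothetical examples, and `list_primitives` is an illustrative helper rather than part of any SDK.

```python
# Hypothetical catalogue for an example server; all names and URIs are made up.
TOOLS = [{
    "name": "send_message",
    "description": "Post a message to a channel.",
    "inputSchema": {  # JSON Schema describing the tool's arguments
        "type": "object",
        "properties": {
            "channel": {"type": "string"},
            "text": {"type": "string"},
        },
        "required": ["channel", "text"],
    },
}]

RESOURCES = [{
    "uri": "file:///docs/handbook.md",
    "name": "Team handbook",
    "mimeType": "text/markdown",
}]

PROMPTS = [{
    "name": "summarise_ticket",
    "description": "Summarise a support ticket for a handover.",
    "arguments": [{"name": "ticket_id", "required": True}],
}]


def list_primitives(method: str) -> dict:
    """Return the result payload for one of the three list methods."""
    catalogue = {
        "tools/list": ("tools", TOOLS),
        "resources/list": ("resources", RESOURCES),
        "prompts/list": ("prompts", PROMPTS),
    }
    key, items = catalogue[method]
    return {key: items}
```

A client would typically call each list method once after connecting, then decide which tools to offer the model and which resources to load into context.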

Why MCP Won Over Alternatives

By March 2026, several competing approaches existed for AI tool integration. MCP won for four reasons:

Anthropic open-sourced it immediately. MCP was released under the MIT licence from day one. Any company could implement it without licensing fees, without Anthropic’s involvement, and without concern about future licensing changes. This removed the primary barrier to adoption for competitors.

The transport layer is simple and standard. MCP messages are JSON-RPC 2.0, carried either over stdio (for servers launched as local subprocesses) or over HTTP, where Server-Sent Events can be used to stream responses. These are universally supported transports: any language, any runtime, any infrastructure can implement MCP servers and clients. No proprietary runtime required.

Anthropic built the ecosystem first. By releasing MCP with reference implementations, an SDK, and early server libraries for common integrations (filesystem, Postgres, Slack, GitHub, web search), Anthropic seeded the ecosystem before competitors had reason to build alternatives. By the time OpenAI evaluated whether to adopt or compete with MCP, there were already thousands of community-built MCP servers. Competing meant fragmentation; adopting meant joining a working ecosystem.

The network effect compounded. Each new MCP server makes the protocol more valuable for every AI agent that supports it. Each new AI agent that supports MCP makes every existing MCP server more valuable for its developer. This bidirectional network effect accelerated adoption faster than any single company decision.

The Ecosystem at 97 Million Installs

The install count includes MCP server and client libraries across all supported languages. The ecosystem as of March 2026:

Official MCP servers (Anthropic-maintained):

- Filesystem — read and write local files

- PostgreSQL — query and modify Postgres databases

- Slack — read channels, post messages, search history

- GitHub — repository access, PR management, code search

- Web search — real-time web queries

- Memory — persistent key-value storage for agents

- Puppeteer — browser automation and web scraping

Major third-party MCP servers:

- Linear, Asana, Jira — project management

- Notion — workspace and database access

- Figma — design file access

- Stripe — payment processing queries

- Salesforce — CRM data access

- AWS, GCP, Azure — cloud infrastructure management

- Supabase, PlanetScale — database management

- VS Code, JetBrains — IDE integration for coding agents

MCP-compatible AI clients:

- Claude (claude.ai, API, Claude Code, Claude Desktop)

- OpenAI GPT-4.1 and GPT-5.4 (via tool use)

- Google Gemini 3 (via Vertex AI tool use)

- Microsoft Copilot (via Azure AI tooling)

- Meta LLaMA-based agents (via community implementations)

- Local models via Ollama (community MCP clients)

What This Means for Developers

The practical implications of MCP becoming the industry standard are significant for anyone building AI-powered applications.

Build once, use everywhere. If you build an MCP server exposing your application’s data and actions to AI agents, every MCP-compatible AI can use it. A customer support tool that builds an MCP server for its knowledge base does not need to maintain separate integrations for Claude, GPT, Gemini, and whatever model the enterprise wants to use next year.

Marketplace economics. The MCP ecosystem is developing marketplace dynamics — a large number of servers creates value for AI platform providers, which attracts more developer investment in MCP servers, which creates more value. The 97 million install count is a proxy for this flywheel gaining momentum.

Reduced vendor lock-in. Before MCP, choosing an AI provider often meant being tied to their integration ecosystem. With MCP, switching from Claude to GPT (or vice versa) does not require rebuilding tool integrations — the MCP servers remain compatible. This is genuinely good for developers and enterprises.

The agentic AI prerequisite. Claude Managed Agents, OpenAI Agents SDK, and Google Agent Builder all depend on tool access to be useful. MCP provides the standardised tool layer that makes agentic workflows composable. Without a standard protocol, every agentic application is a bespoke integration project.

The Sovereignty Angle

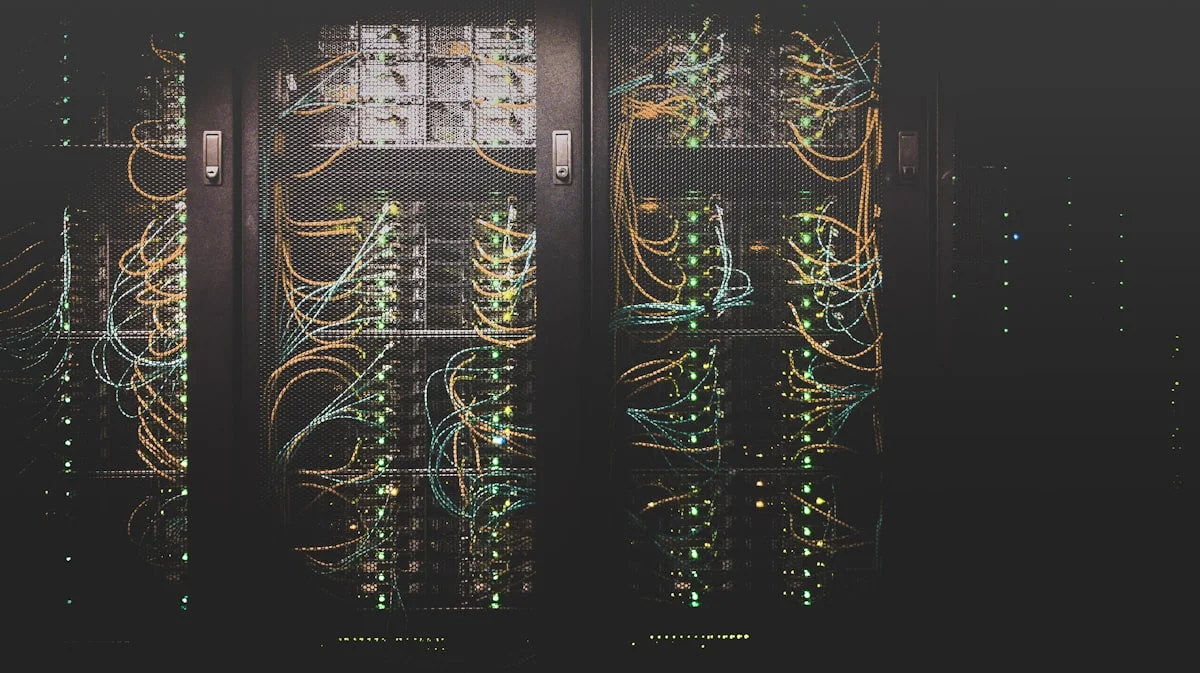

For privacy-focused developers, MCP has a dimension worth considering: MCP servers run on your infrastructure.

When an AI agent connects to an MCP server, the server runs wherever you deploy it: your local machine, your VPS, your company’s private cloud. The underlying data store never needs to be exposed outside your infrastructure. The AI model (Claude, GPT) receives only the tool outputs that the MCP server chooses to return, so whatever those outputs contain does reach the model provider, while the raw data behind them stays where it is.

This architecture enables a specific privacy pattern: local MCP servers with cloud AI models. Your MCP server runs locally and has access to your private database. The AI model (Claude) makes tool calls to the MCP server. The server queries the database, filters sensitive data, and returns only the relevant result to the model. The model never directly touches your database.
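
A minimal sketch of the filtering step, assuming a local SQLite database stands in for the private store. The `customers` table, the `SENSITIVE_COLUMNS` policy, and the `lookup_customer` handler are all hypothetical; only the shape of the returned tool result (`content` plus `isError`) follows the MCP specification.

```python
import json
import sqlite3

SENSITIVE_COLUMNS = {"email", "card_number"}  # hypothetical redaction policy


def open_demo_db() -> sqlite3.Connection:
    """Create an in-memory database standing in for a private data store."""
    conn = sqlite3.connect(":memory:")
    conn.row_factory = sqlite3.Row
    conn.execute(
        "CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT, "
        "email TEXT, card_number TEXT, plan TEXT)"
    )
    conn.execute(
        "INSERT INTO customers (name, email, card_number, plan) VALUES (?, ?, ?, ?)",
        ("Ada", "ada@example.com", "4111111111111111", "pro"),
    )
    conn.commit()
    return conn


def lookup_customer(conn: sqlite3.Connection, customer_id: int) -> dict:
    """Tool handler: query locally, drop sensitive columns, return a tool result."""
    row = conn.execute(
        "SELECT * FROM customers WHERE id = ?", (customer_id,)
    ).fetchone()
    if row is None:
        return {"content": [{"type": "text", "text": "customer not found"}], "isError": True}
    # Only non-sensitive fields are serialised into the text sent to the model.
    safe = {key: row[key] for key in row.keys() if key not in SENSITIVE_COLUMNS}
    return {"content": [{"type": "text", "text": json.dumps(safe)}], "isError": False}


if __name__ == "__main__":
    print(lookup_customer(open_demo_db(), 1)["content"][0]["text"])
```

The design point is that redaction happens inside the server, before the result is serialised, so the filtering policy is enforced on your side regardless of which model is calling.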

For organisations that want AI assistance with their private data but cannot send that data to external AI APIs, this architecture is the practical solution. The Vucense practical guide: pair local MCP servers with a local model served through Ollama and a local MCP client for full sovereignty, or run MCP servers on your private infrastructure with Claude API calls for a good privacy-quality balance.

FAQ

What is MCP (Model Context Protocol)? MCP is an open standard created by Anthropic that defines how AI agents connect to external tools, data sources, and APIs. It provides a universal interface: any MCP-compatible AI agent can use any MCP server, regardless of which AI company built either component. It was released in November 2024 under the MIT licence.

Why did MCP reach 97 million installs so quickly? Three factors: Anthropic open-sourced it from day one (no licensing barriers), all major AI providers adopted it rather than building competing standards, and the ecosystem of pre-built MCP servers created immediate practical value. The bidirectional network effect — more servers make agents more valuable, more agents make servers more valuable — accelerated adoption.

Do I need to use Claude to use MCP? No. MCP is an open protocol. OpenAI, Google, Microsoft, and Meta all support MCP-compatible tooling. Local models via Ollama can also use MCP through community client implementations. Claude is where MCP started, but it is no longer Claude-specific.

How do I build an MCP server? Anthropic provides official SDKs in Python and TypeScript. The minimum implementation defines your tools (what actions the agent can take), their input schemas, and their execution logic. A simple MCP server can be built in under 50 lines of Python. The Anthropic MCP documentation at modelcontextprotocol.io has quickstart guides and reference implementations.
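
To show the three pieces named above (tool definition, input schema, execution logic) without depending on any installed package, here is a standard-library sketch of the request dispatch at the core of a server. It is not the official SDK, which handles the initialisation handshake, transports, and validation for you; the `add` tool and the `handle` function are hypothetical, while the `tools/list` and `tools/call` method names and the JSON-RPC error codes are standard.

```python
def add(a: float, b: float) -> float:
    """Execution logic for the example tool."""
    return a + b


TOOLS = {
    "add": {
        "handler": add,
        "definition": {
            "name": "add",
            "description": "Add two numbers.",
            "inputSchema": {
                "type": "object",
                "properties": {"a": {"type": "number"}, "b": {"type": "number"}},
                "required": ["a", "b"],
            },
        },
    }
}


def handle(request: dict) -> dict:
    """Dispatch one JSON-RPC request to the matching MCP-style method."""
    method = request["method"]
    params = request.get("params", {})
    if method == "tools/list":
        result = {"tools": [tool["definition"] for tool in TOOLS.values()]}
    elif method == "tools/call":
        tool = TOOLS.get(params.get("name"))
        if tool is None:
            # -32602 is the JSON-RPC "invalid params" code
            return {"jsonrpc": "2.0", "id": request["id"],
                    "error": {"code": -32602, "message": "unknown tool"}}
        value = tool["handler"](**params.get("arguments", {}))
        result = {"content": [{"type": "text", "text": str(value)}], "isError": False}
    else:
        # -32601 is the JSON-RPC "method not found" code
        return {"jsonrpc": "2.0", "id": request["id"],
                "error": {"code": -32601, "message": "method not found"}}
    return {"jsonrpc": "2.0", "id": request["id"], "result": result}
```

Calling `handle` with a `tools/call` request for `add` and arguments `{"a": 2, "b": 3}` returns a result whose text content is `"5"`. In practice the official Python or TypeScript SDK is the better starting point, since it wraps this dispatch behind decorators and takes care of the protocol details omitted here.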

What is the difference between MCP and function calling? Function calling (OpenAI’s term) or tool use (Anthropic’s term) is the mechanism by which an AI model requests that a tool be executed. MCP is a standardised protocol layered around that mechanism: it defines how a tool is exposed, discovered, and invoked across different AI providers. The model still uses function calling or tool use to request execution; MCP adds standardised discovery, transport, and schema definition.
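
One way to see the relationship is that a single MCP tool definition can be translated into whichever tool format a given model API expects. The sketch below assumes the commonly documented request shapes: a top-level `input_schema` field for Anthropic tool use, and a `function` object with `parameters` for OpenAI function calling in the Chat Completions API. The converter names and the `search_docs` tool are hypothetical, and real MCP clients perform this mapping internally.

```python
def to_anthropic_tool(mcp_tool: dict) -> dict:
    """Map an MCP tool definition onto the Anthropic tool-use shape."""
    return {
        "name": mcp_tool["name"],
        "description": mcp_tool.get("description", ""),
        "input_schema": mcp_tool["inputSchema"],
    }


def to_openai_tool(mcp_tool: dict) -> dict:
    """Map an MCP tool definition onto the OpenAI function-calling shape."""
    return {
        "type": "function",
        "function": {
            "name": mcp_tool["name"],
            "description": mcp_tool.get("description", ""),
            "parameters": mcp_tool["inputSchema"],
        },
    }


if __name__ == "__main__":
    # Hypothetical tool, as a server might return it from tools/list
    search_docs = {
        "name": "search_docs",
        "description": "Search the knowledge base.",
        "inputSchema": {
            "type": "object",
            "properties": {"query": {"type": "string"}},
            "required": ["query"],
        },
    }
    print(to_anthropic_tool(search_docs)["input_schema"] == search_docs["inputSchema"])  # True
    print(to_openai_tool(search_docs)["function"]["name"])  # search_docs
```

The JSON Schema passes through unchanged in both cases, which is why one server definition can serve several model APIs.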
